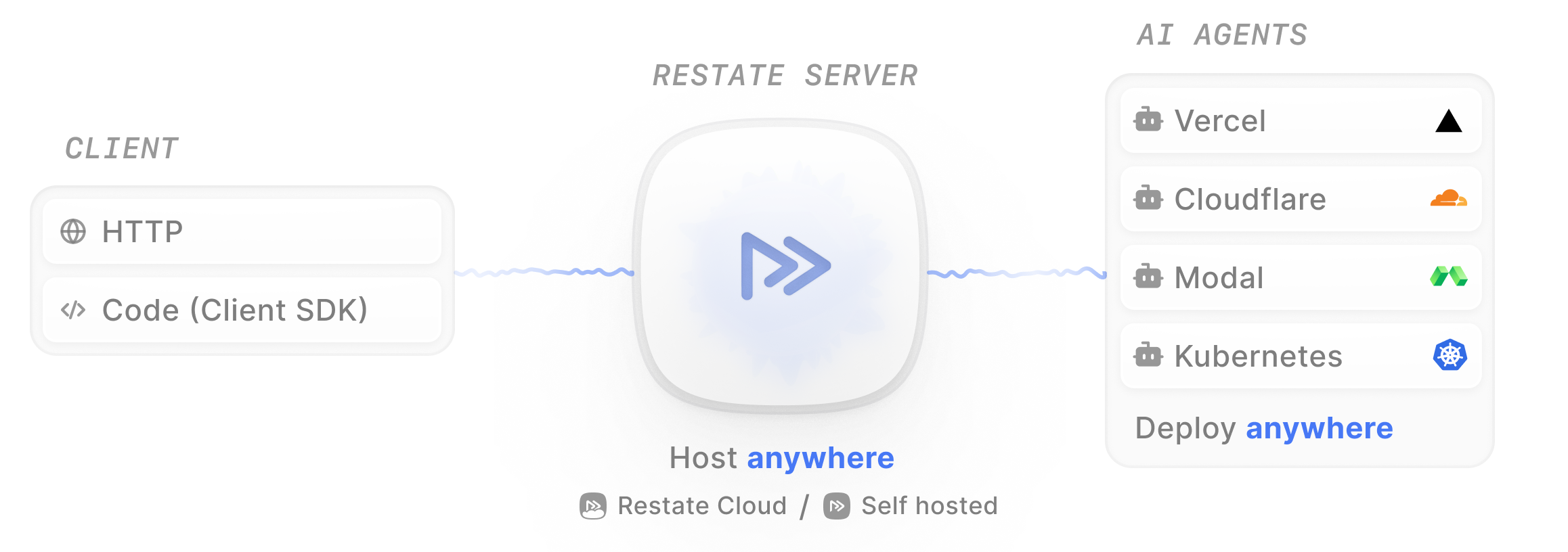

A Restate AI application has two main components:

- Restate Server: Sits in front of your agents and takes care of orchestration and resiliency

- Agent Services: Your agent logic using the Restate SDK for durability

Creating a durable agent

Follow the Agent Quickstart to run a durable agent end-to-end. A Restate agent has three building blocks:

- The handler: An HTTP handler containing your agent logic, exposed in a Restate service

- LLM calls: Persisted so responses are not re-fetched on recovery

- Tool executions: Wrapped in durable steps so side effects are not duplicated
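As a rough illustration of how these three building blocks fit together, here is a minimal sketch in plain Python. It does not use the Restate SDK: `ToyContext`, `fake_llm`, `get_weather`, and `agent_handler` are hypothetical names invented for this example, and the `run` method only mimics the idea of a named durable step without persisting anything.

```python
from typing import Any, Callable, Dict, List


class ToyContext:
    """Hypothetical stand-in for a durable SDK context; NOT the Restate API."""

    def __init__(self) -> None:
        self.steps: List[str] = []  # names of the steps executed, in order

    def run(self, name: str, action: Callable[[], Any]) -> Any:
        # A real durable context would persist the result before returning it.
        # This toy version only executes the action and records the step name.
        result = action()
        self.steps.append(name)
        return result


def fake_llm(prompt: str) -> Dict[str, str]:
    # Placeholder for a model call: it always asks for the weather tool.
    return {"tool": "get_weather", "argument": "Berlin"}


def get_weather(city: str) -> str:
    # Placeholder tool; a real tool might call an external API.
    return f"Sunny in {city}"


TOOLS: Dict[str, Callable[[str], str]] = {"get_weather": get_weather}


def agent_handler(ctx: ToyContext, prompt: str) -> str:
    # Building block 1: the handler holds the agent logic.
    # Building block 2: the LLM call is wrapped in a named step.
    decision = ctx.run("llm-call", lambda: fake_llm(prompt))
    # Building block 3: the tool execution is wrapped in its own step.
    tool = TOOLS[decision["tool"]]
    return ctx.run(f"tool:{decision['tool']}", lambda: tool(decision["argument"]))


ctx = ToyContext()
answer = agent_handler(ctx, "What is the weather in Berlin?")
print(answer)     # Sunny in Berlin
print(ctx.steps)  # ['llm-call', 'tool:get_weather']
```

The point of the sketch is the shape: every call with a cost or a side effect goes through a named step, so a durable runtime has a well-defined unit whose result it can record.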

Observing your agent

The Restate UI (http://localhost:9070) shows the step-by-step execution trace of your agent, with detailed traces of every LLM call, tool execution, and state change.


How durable execution works

When your agent runs, Restate records each step's result in a journal. If the process crashes mid-execution:

- Restate detects the failure and restarts the handler

- Completed steps are replayed from the journal (no re-execution)

- Execution resumes from the first incomplete step

As a result:

- LLM calls are not repeated (saving cost and time)

- Tool side effects are not duplicated (no double bookings, no duplicate emails)

- Multi-step workflows recover their full progress automatically
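The journal-and-replay behavior can be approximated with a small in-memory model. This is a sketch under stated assumptions, not how Restate is implemented: the journal here is a plain Python list rather than state persisted by the Restate Server, the crash is simulated with an exception, and `ReplayContext`, `run_with_recovery`, `call_llm`, and `send_email` are hypothetical names chosen for illustration.

```python
from typing import Any, Callable, List


class Crash(Exception):
    """Simulated process failure."""


class ReplayContext:
    """Toy context that replays journaled results; NOT the Restate API."""

    def __init__(self, journal: List[Any]) -> None:
        self.journal = journal  # survives across attempts
        self.position = 0       # index of the next step in this attempt

    def run(self, name: str, action: Callable[[], Any]) -> Any:
        if self.position < len(self.journal):
            # Step completed in an earlier attempt: reuse the recorded result.
            result = self.journal[self.position]
        else:
            # First incomplete step: execute it and record the result.
            result = action()
            self.journal.append(result)
        self.position += 1
        return result


calls = {"llm": 0, "email": 0}  # how often each side effect really ran
crash_once = {"armed": True}    # makes the first attempt fail mid-execution


def call_llm() -> str:
    calls["llm"] += 1
    return "send a confirmation email"


def send_email() -> str:
    calls["email"] += 1
    return "email sent"


def handler(ctx: ReplayContext) -> str:
    plan = ctx.run("llm-call", call_llm)
    receipt = ctx.run("send-email", send_email)
    if crash_once["armed"]:
        crash_once["armed"] = False
        raise Crash("process died after the email step")
    return ctx.run("final-answer", lambda: f"{plan}: {receipt}")


def run_with_recovery(journal: List[Any]) -> str:
    # Restart the handler with a fresh context until it completes.
    while True:
        try:
            return handler(ReplayContext(journal))
        except Crash:
            continue


journal: List[Any] = []
result = run_with_recovery(journal)
print(result)        # send a confirmation email: email sent
print(calls)         # {'llm': 1, 'email': 1}
print(len(journal))  # 3
```

Although the handler runs twice, the LLM call and the email each execute once: the second attempt reads both results from the journal and only executes the final step.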