Combine Restate and Langfuse to get full observability into your agent executions. Langfuse traces every LLM call and tool invocation, along with token usage and cost. Restate traces every durable step, so you see agentic steps alongside regular workflow steps in a single trace. You don’t need to change your agent code; you only add the Langfuse instrumentation to your entry point.

Instrumentation setup

What you see in Langfuse

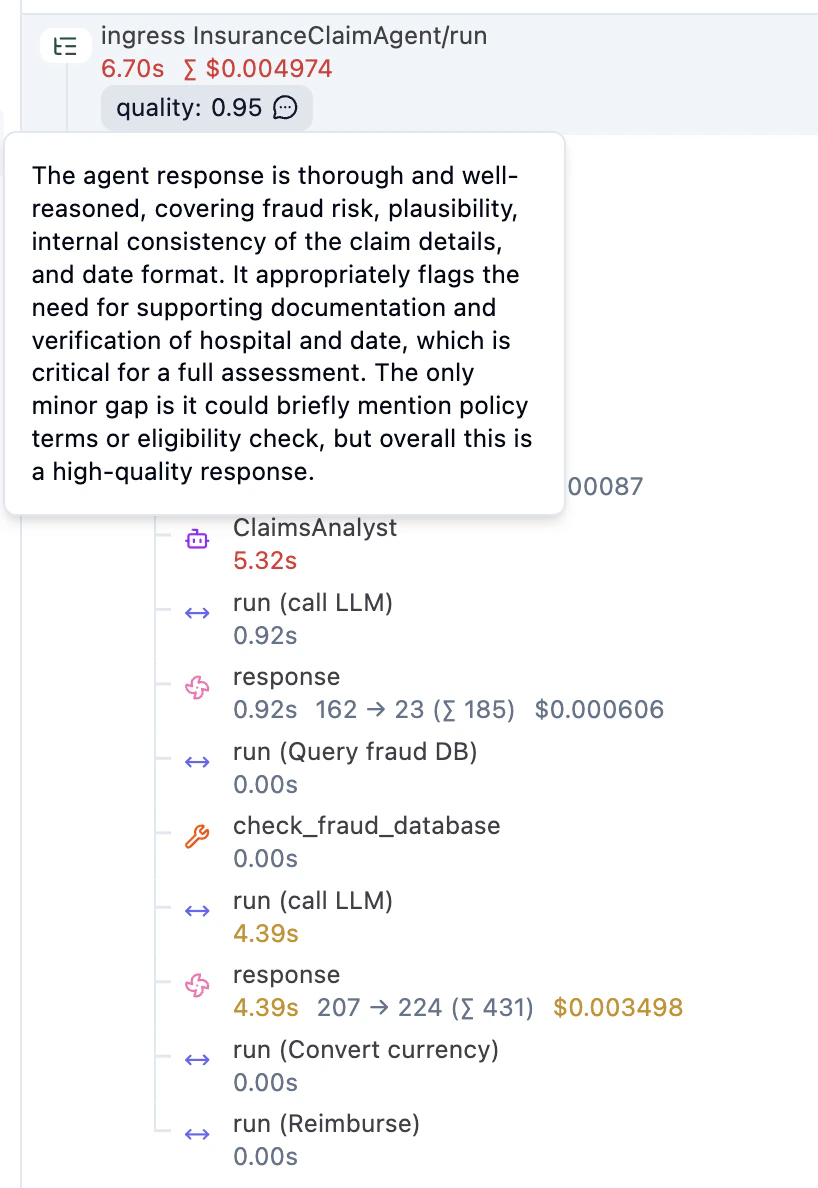

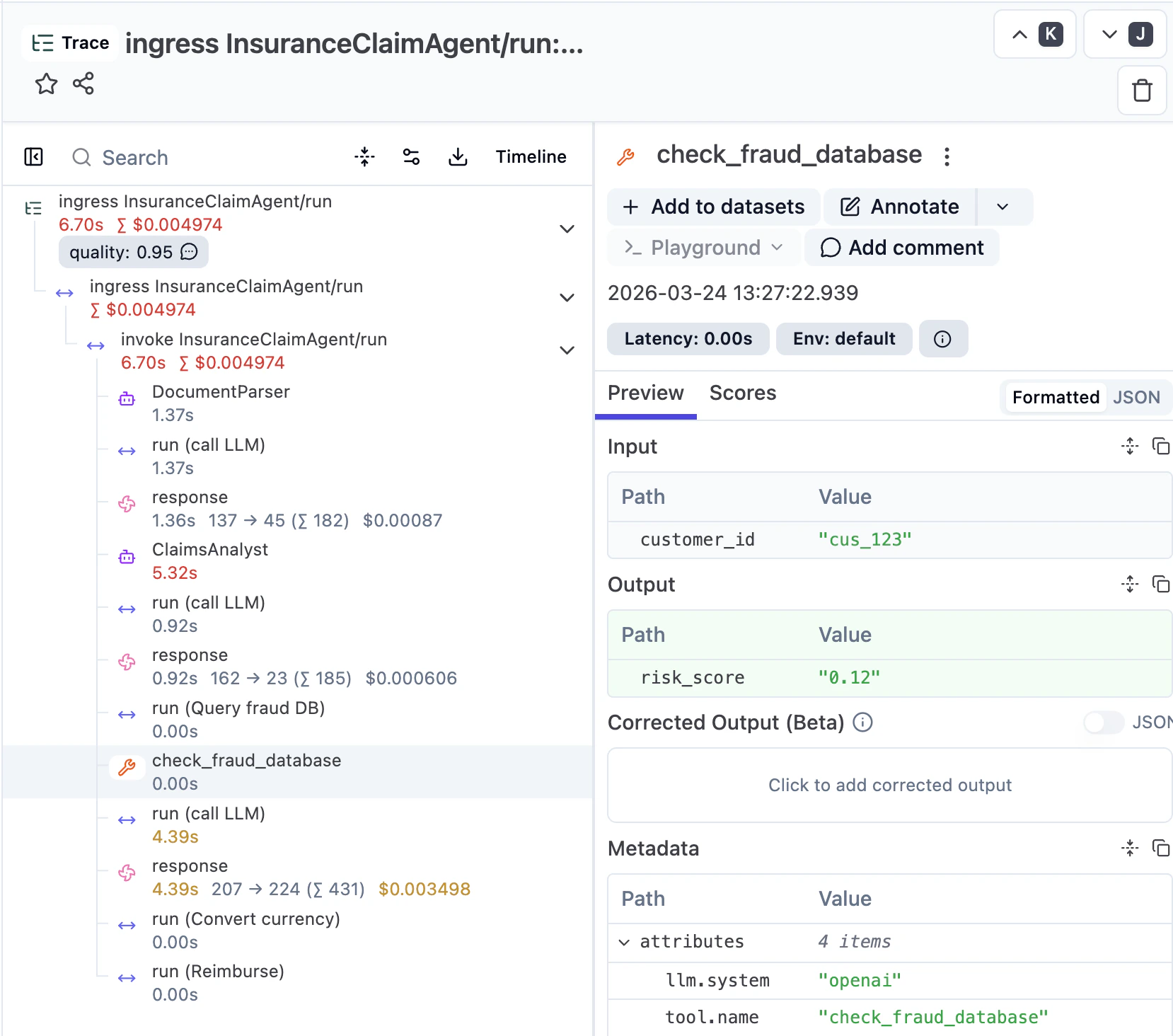

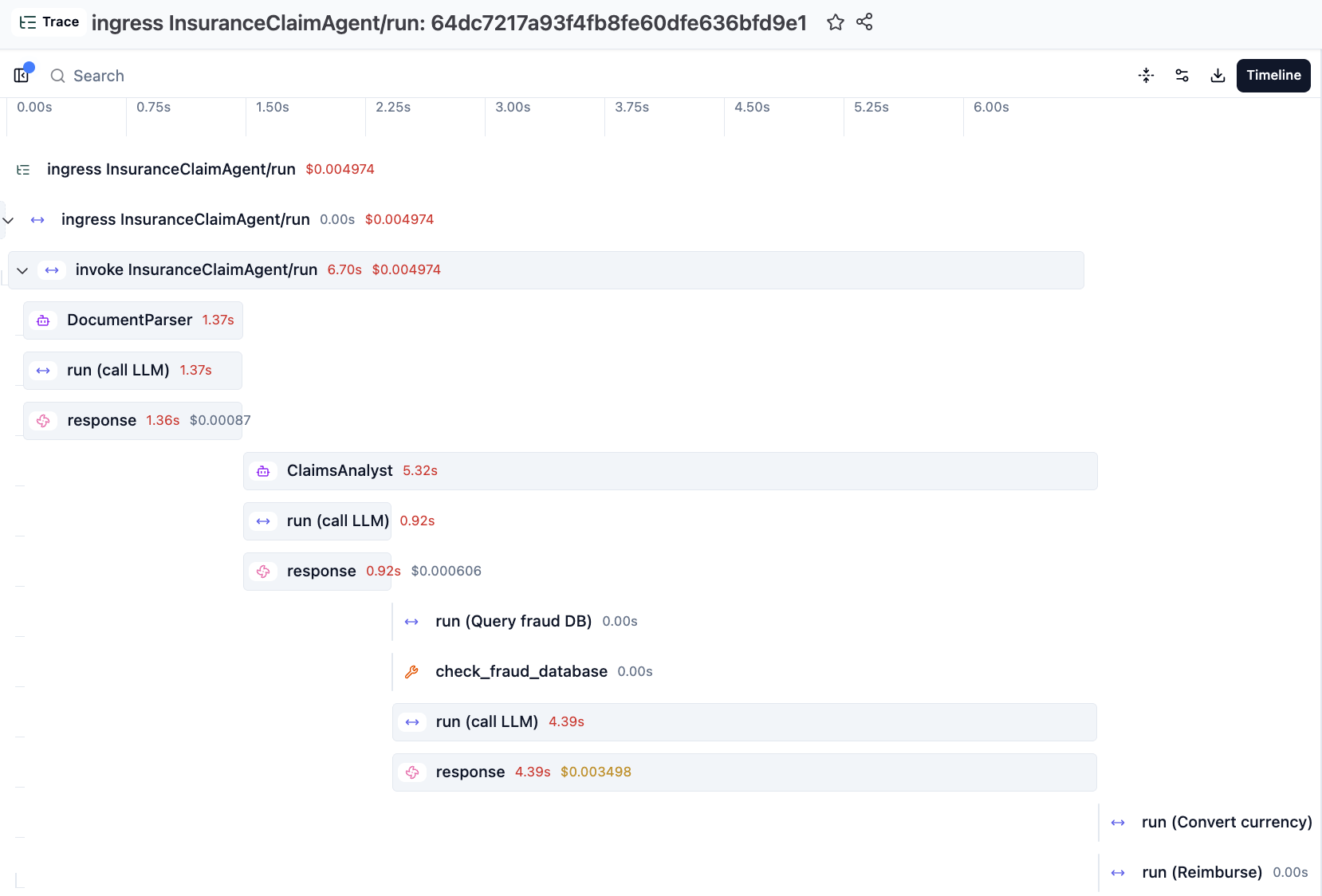

Once you send a request, you can inspect the trace in Langfuse. You see the agentic steps (LLM calls, tool invocations) alongside regular workflow steps (e.g. currency conversion, reimbursement), with inputs, outputs, model configuration, and token usage for each LLM call. Restate manages the execution, starts the parent span, and exports the full journal as OpenTelemetry traces. The Langfuse SDK attaches AI-specific spans and metadata under Restate’s parent span.

Restate’s Tracer Provider flattens the Langfuse spans so that they are structured consistently with the Restate spans in the UI.

We are working on the next iteration of the integration, which will preserve the Langfuse span nesting and place the Restate spans at the correct depth within it.
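The toy model below shows what flattening means for the shape of a trace. It is a sketch under stated assumptions and not Restate’s Tracer Provider. The `Span` class, the `flatten_under` helper, and the span names are all hypothetical. Real OpenTelemetry spans also carry IDs, timestamps, and attributes, which are omitted here. In this model, every span nested anywhere under the Restate parent span becomes a direct child of that parent, in depth-first order.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Span:
    """Hypothetical, minimal stand-in for a trace span: a name plus nested child spans."""
    name: str
    children: List["Span"] = field(default_factory=list)


def flatten_under(parent: Span) -> Span:
    """Return a copy of `parent` whose descendants are all re-attached as direct
    children, listed in depth-first order (a toy model of span flattening)."""
    flat: List[Span] = []

    def visit(span: Span) -> None:
        for child in span.children:
            flat.append(Span(child.name))  # copy the span without its own children
            visit(child)                   # then pull up its descendants as well

    visit(parent)
    return Span(parent.name, flat)


# Hypothetical nested trace: a Restate handler span with Langfuse agent spans inside.
trace = Span("restate: reimburse", [
    Span("langfuse: agent run", [
        Span("langfuse: llm call"),
        Span("langfuse: tool call", [Span("restate: run convert_currency")]),
    ]),
])

flattened = flatten_under(trace)
print([s.name for s in flattened.children])
# ['langfuse: agent run', 'langfuse: llm call', 'langfuse: tool call', 'restate: run convert_currency']
```

The planned iteration described above would keep the nested shape of `trace` instead. The Restate span `restate: run convert_currency` would stay at its original depth under the tool call.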

Going further

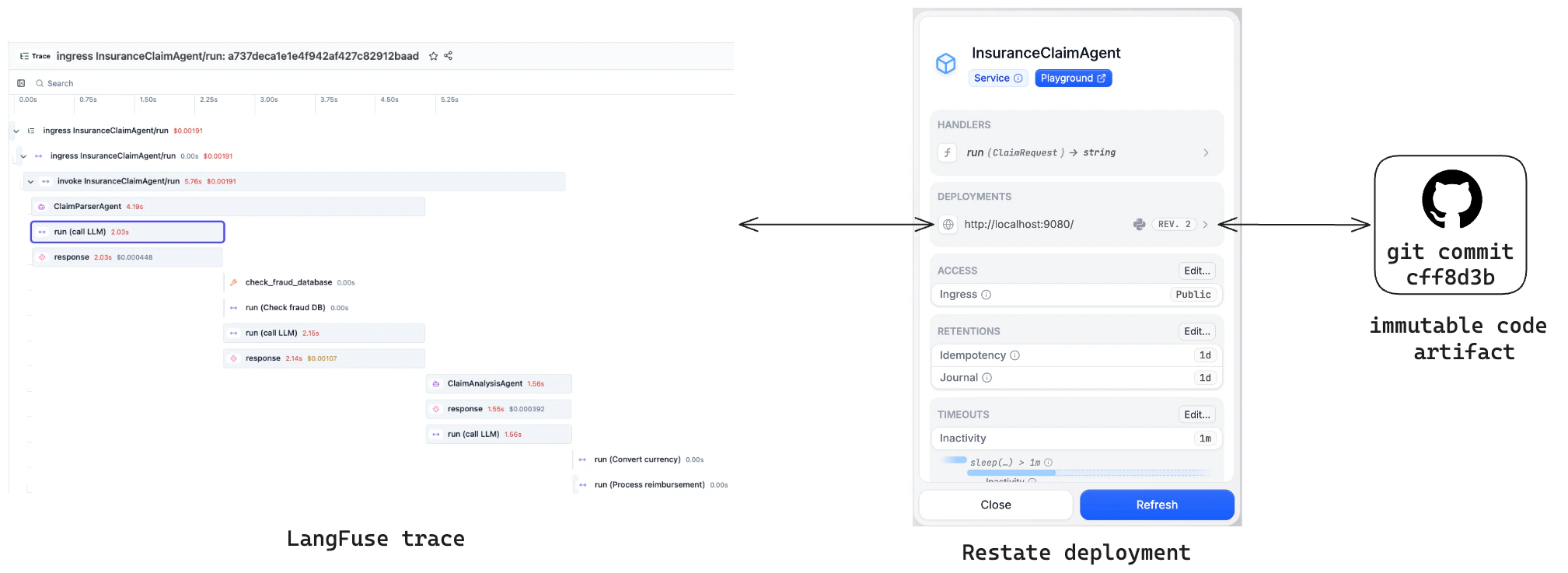

Langfuse and Restate complement each other beyond basic tracing:

Versioning

Restate’s versioning model ensures each trace is linked to a single immutable code version. Compare quality across versions in Langfuse and spot regressions.
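The sketch below is a rough illustration of spotting regressions. It averages evaluation scores per code version and flags any version whose mean score drops compared with the one before it. It assumes you already have (version, score) pairs, for example exported from Langfuse with each trace tagged by the code version it ran on. The helper names, the sample scores, and the 0.05 tolerance are all hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple


def mean_score_by_version(scores: List[Tuple[str, float]]) -> Dict[str, float]:
    """Average the evaluation scores recorded for each code version."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for version, score in scores:
        grouped[version].append(score)
    return {version: mean(values) for version, values in grouped.items()}


def find_regressions(order: List[str], means: Dict[str, float],
                     tolerance: float = 0.05) -> List[Tuple[str, str]]:
    """Return (previous, current) version pairs whose mean score dropped by more than `tolerance`."""
    regressions = []
    for prev, curr in zip(order, order[1:]):
        if prev in means and curr in means and means[prev] - means[curr] > tolerance:
            regressions.append((prev, curr))
    return regressions


# Hypothetical sample data: (code version, judge score in [0, 1]) per trace.
scores = [("v1", 0.8), ("v1", 0.9), ("v2", 0.7), ("v2", 0.6), ("v3", 0.75)]
means = mean_score_by_version(scores)
print(find_regressions(["v1", "v2", "v3"], means))  # [('v1', 'v2')]
```

Because each trace maps to exactly one immutable version, grouping by version never mixes scores from two different code paths.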

Prompt management

Fetch version-controlled prompts from Langfuse as a durable step with `ctx.run`. Because Restate journals the step’s result, retries of the same execution reuse the same prompt, while new executions pick up the latest version.
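The sketch below models why retries reuse the same prompt. `ToyDurableContext` is a hypothetical, synchronous stand-in for a Restate context. Its `run` method records each step’s result in a journal and replays that result when the same invocation is retried.

This is an assumption-laden simplification. The real Restate `ctx.run` is awaited, and it journals entries by their position in the execution rather than by name. `fetch_latest_prompt` is a hypothetical placeholder for a Langfuse prompt fetch. Check both SDKs’ documentation for the actual calls.

```python
from typing import Any, Callable, Dict


class ToyDurableContext:
    """Hypothetical stand-in for a Restate context. `run` records each step's
    result in a journal; when the same invocation is retried with the same
    journal, the recorded result is replayed instead of re-running the step."""

    def __init__(self, journal: Dict[str, Any]):
        self.journal = journal  # persisted across retries of one invocation

    def run(self, name: str, action: Callable[[], Any]) -> Any:
        if name in self.journal:
            return self.journal[name]  # replay: reuse the recorded result
        result = action()
        self.journal[name] = result    # record the result (here: only in memory)
        return result


# Hypothetical prompt store standing in for Langfuse prompt management.
prompt_store = {"version": 1, "text": "You are a reimbursement assistant."}


def fetch_latest_prompt() -> Dict[str, Any]:
    """Hypothetical stand-in for fetching the current prompt version from Langfuse."""
    return dict(prompt_store)


journal_a: Dict[str, Any] = {}
first = ToyDurableContext(journal_a).run("fetch prompt", fetch_latest_prompt)

prompt_store["version"] = 2  # a new prompt version is published in the meantime

retry = ToyDurableContext(journal_a).run("fetch prompt", fetch_latest_prompt)  # same invocation, retried
fresh = ToyDurableContext({}).run("fetch prompt", fetch_latest_prompt)         # new invocation
print(first["version"], retry["version"], fresh["version"])  # 1 1 2
```

Pinning the prompt inside the journal keeps a retried execution deterministic. A prompt update published mid-run cannot change the behavior of an invocation that has already fetched its prompt.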

Async evals example

Run LLM-as-a-Judge evaluations as asynchronous Restate workflows, with Restate acting as both the queue and the orchestrator. The agent submits an evaluation via a one-way call, so the evaluation runs in the background without blocking the agent. The eval workflow runs an LLM judge and writes the score back to the original trace in Langfuse.
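Here is a minimal sketch of this fire-and-forget pattern, using plain asyncio in place of Restate:

- An `asyncio.Queue` plays the role Restate takes as the queue.
- `queue.put_nowait` stands in for the one-way call.
- A background task stands in for the eval workflow.
- `toy_judge` is a hypothetical placeholder for the LLM judge.
- The `scores` dict stands in for writing the score back to the Langfuse trace.

None of these names are Restate or Langfuse APIs.

```python
import asyncio
import contextlib
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class EvalRequest:
    trace_id: str
    question: str
    answer: str


def toy_judge(req: EvalRequest) -> float:
    """Hypothetical placeholder for an LLM-as-a-Judge call: 1.0 if the answer is non-empty."""
    return 1.0 if req.answer.strip() else 0.0


async def agent_handler(queue: asyncio.Queue, trace_id: str, question: str) -> str:
    answer = f"Answer to: {question}"  # stand-in for the agent's real work
    # Fire-and-forget: enqueue the evaluation and return without waiting for it.
    queue.put_nowait(EvalRequest(trace_id, question, answer))
    return answer


async def eval_worker(queue: asyncio.Queue, scores: Dict[str, float]) -> None:
    while True:
        req = await queue.get()
        # Stand-in for writing the score back to the original trace in Langfuse.
        scores[req.trace_id] = toy_judge(req)
        queue.task_done()


async def main() -> Tuple[str, Dict[str, float], Dict[str, float]]:
    queue: asyncio.Queue = asyncio.Queue()
    scores: Dict[str, float] = {}
    worker = asyncio.create_task(eval_worker(queue, scores))

    answer = await agent_handler(queue, "trace-123", "Convert 10 EUR to USD")
    scores_when_agent_returned = dict(scores)  # the eval has not run yet

    await queue.join()  # wait for the background eval (only needed for this demo)
    worker.cancel()
    with contextlib.suppress(asyncio.CancelledError):
        await worker
    return answer, scores_when_agent_returned, scores


answer, before, after = asyncio.run(main())
print(before, after)  # {} {'trace-123': 1.0}
```

The agent returns its answer before any score exists, which is the non-blocking behavior described above. With Restate, the queued evaluation would also be durable and retried after failures. This in-memory sketch does not provide that.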